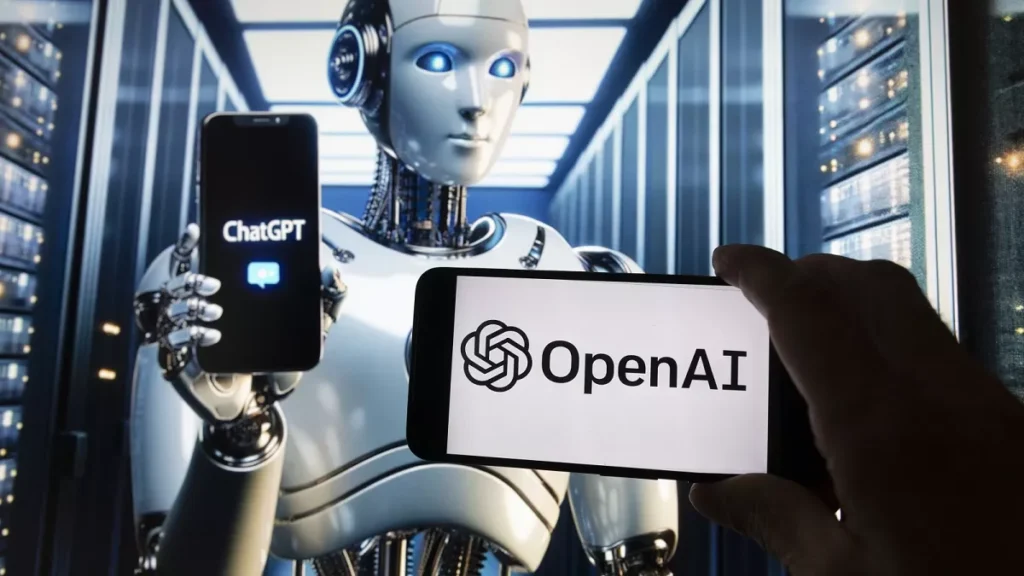

OpenAI has revealed that more than a million ChatGPT users each week have conversations indicating potential suicidal planning or intent.

The company shared this statistic in a blog post published on Monday, stating that approximately 0.15 percent of users active in a given week have conversations that include “explicit indicators of potential suicidal planning or intent.”

Given that ChatGPT now has over 800 million weekly users, this figure translates to roughly 1.2 million people a week. OpenAI also reported that about 0.07 percent of weekly active users, roughly 560,000 individuals, show signs of possible mental health crises, including psychosis or mania.
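The head counts follow directly from the reported percentages. The arithmetic can be sketched as below, assuming a base of exactly 800 million weekly users (the company's figure is a lower bound, so the true counts may be somewhat higher); `users_affected` is a hypothetical helper name used only for illustration.

```python
# Assumed base: exactly 800 million weekly users (reported as "over 800 million").
WEEKLY_USERS = 800_000_000


def users_affected(share_percent: float, weekly_users: int = WEEKLY_USERS) -> int:
    """Convert a percentage of weekly users into an approximate head count."""
    return round(weekly_users * share_percent / 100)


# 0.15 percent: explicit indicators of potential suicidal planning or intent
print(f"{users_affected(0.15):,}")  # 1,200,000

# 0.07 percent: possible signs of crises such as psychosis or mania
print(f"{users_affected(0.07):,}")  # 560,000
```

At this scale, each additional 0.01 percent corresponds to about 80,000 people.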

The discussion around AI and mental health intensified following the tragic suicide of California teenager Adam Raine earlier this year. His parents filed a lawsuit alleging that ChatGPT provided detailed instructions on how to end his life.

In response, OpenAI has introduced new safety measures aimed at preventing similar incidents. These include enhanced parental controls, expanded access to crisis hotlines, automatic rerouting of sensitive discussions to safer AI models, and prompts encouraging users to take breaks during prolonged chats.

The company has also updated ChatGPT’s capabilities to better detect and respond to mental health emergencies. OpenAI stated that it is currently collaborating with over 170 mental health professionals to refine its system and minimize harmful or inappropriate responses.

What you should know

OpenAI’s latest figures show that, at ChatGPT’s scale, even fractions of a percent amount to hundreds of thousands of people each week raising signs of serious mental distress with an AI chatbot.

With millions of users engaging deeply with AI tools, the data highlights the urgent need for responsible AI design and crisis intervention measures.

By working with mental health experts and tightening safeguards, OpenAI aims to make ChatGPT safer for vulnerable users across its global user base.